84% of organisations are planning to increase their investment in voice AI, and the market is expected to grow rapidly in the coming years. Yet, while demos make it look simple, actually building a reliable AI voice agent often turns out to be far more complex than expected. Teams run into challenges with choosing the right architecture, managing latency, and connecting multiple technologies smoothly.

If you're wondering how to build an AI voice agent, create one without coding, or understand the full technical process behind it, this guide is designed to walk you through every step. Whether you want to build an AI voice agent from scratch, explore no-code tools, or evaluate the right approach for your business, you'll find clear and practical answers here.

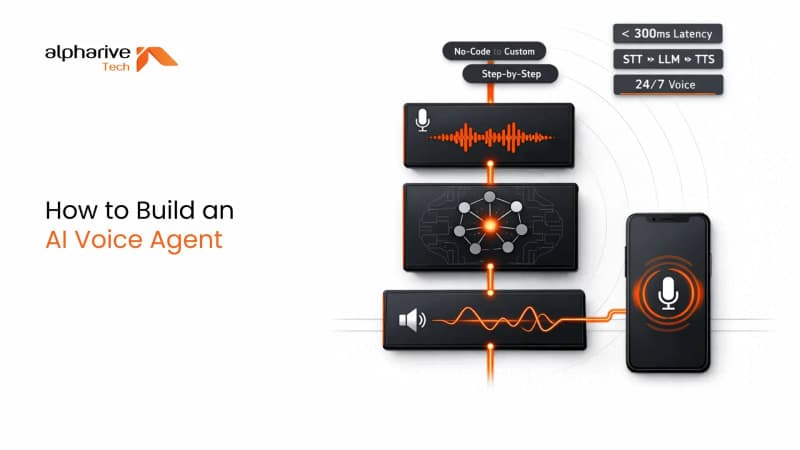

In this blog, we'll cover the three main ways to build an AI voice agent, explain the complete technology stack including STT, LLM, and TTS, and guide you through a step-by-step process from planning to deployment. You'll also get a realistic view of timelines, costs, tools, and common mistakes so you can make informed decisions before you start.

What Is an AI Voice Agent?

An AI voice agent is a software system that can understand spoken language, process it, and respond back in a natural human-like voice. Unlike traditional IVR systems that follow fixed scripts, these agents use artificial intelligence to handle real conversations, making them more flexible and context-aware. They can answer questions, complete tasks, and even hold multi-turn conversations without sounding robotic.

At the same time, AI voice agents are becoming a core part of modern business operations across industries. From handling customer support calls to booking appointments or assisting users in real time, they reduce manual workload while improving response speed. What makes them powerful is not just their ability to speak, but their ability to understand intent and respond intelligently in dynamic situations.

The Three Technologies Behind Every AI Voice Agent

Every AI voice agent runs on a combination of three key technologies working together in a continuous flow. First, Speech-to-Text (STT) converts the user's voice into text within milliseconds. Then, a Large Language Model (LLM) processes that text to understand intent and generate a meaningful response. Finally, Text-to-Speech (TTS) converts that response back into natural-sounding audio for the user.

This pipeline happens almost instantly during a conversation, but even small delays at any stage can affect the overall experience. If the STT is slow or inaccurate, the agent reacts late or mishears the user. If the LLM takes too long, responses feel delayed. And if the TTS lacks quality, the voice sounds unnatural. The balance between speed and accuracy across all three layers defines how smooth the interaction feels.
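To make the flow concrete, here is a minimal sketch of one conversational turn passing through all three stages, with a timer around each. The functions `transcribe`, `generate_reply`, and `synthesize` are hypothetical placeholders rather than calls from any real SDK; in an actual build, each would wrap the vendor API you choose.

```python
import time
from typing import Callable, Dict, Tuple

# Hypothetical stand-ins for real STT, LLM, and TTS services.
def transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already words

def generate_reply(text: str) -> str:
    return f"You said: {text}"

def synthesize(text: str) -> bytes:
    return text.encode("utf-8")  # pretend these bytes are audio

def timed(stage: Callable, value):
    """Run one stage and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = stage(value)
    return result, (time.perf_counter() - start) * 1000

def handle_turn(audio: bytes) -> Tuple[bytes, Dict[str, float]]:
    """Run one conversational turn through STT, then LLM, then TTS."""
    timings: Dict[str, float] = {}
    text, timings["stt_ms"] = timed(transcribe, audio)
    reply, timings["llm_ms"] = timed(generate_reply, text)
    speech, timings["tts_ms"] = timed(synthesize, reply)
    # The three stage timings add up to the delay the caller experiences.
    timings["total_ms"] = sum(timings.values())
    return speech, timings
```

Calling `handle_turn(b"book a table")` returns the synthesised bytes plus a per-stage timing dictionary, which shows at a glance which layer is slowing the turn down.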

Two Architectures: Chained Pipeline vs. Speech-to-Speech

AI voice agent architecture plays a crucial role in how efficiently conversations are processed and delivered. The most common setup is the chained pipeline, where speech is first converted to text, then processed, and finally turned back into speech. This approach offers flexibility and control, allowing teams to choose the best tools for each layer.

On the other hand, speech-to-speech architecture uses a single model to handle both input and output directly in audio form. While this reduces latency and simplifies the flow, it often limits customisation and control over each component. Many real-world applications use a hybrid approach, combining both methods to balance performance, flexibility, and scalability depending on the use case.

Where AI Voice Agents Are Used Today

AI voice agents are already being used across a wide range of industries to automate interactions and improve user experience. In customer support, they handle high volumes of calls efficiently. In healthcare, they assist with appointment scheduling and patient queries. E-commerce platforms use them for order tracking, while banks rely on them for account-related assistance.

Beyond that, industries like hospitality, logistics, and education are also adopting voice agents to simplify operations and provide instant support. From hotel check-ins to field service coordination and language learning, the use cases continue to expand. One of the most impactful examples today is the rise of AI voice agents for customer service, where businesses automate conversations while maintaining a human-like experience.

Before You Build: Define Your AI Voice Agent's Purpose

Before you build an AI voice agent, it's important to understand that most failures don't come from technology but from unclear direction. Many teams jump straight into tools and platforms without fully defining what the agent should actually do. This often leads to rework, delays, and solutions that don't deliver real value.

With this in mind, taking time to plan your AI voice agent properly sets the foundation for everything that follows. It helps you choose the right build approach, select the right tools, and avoid costly mistakes later. A well-defined purpose also makes it easier to measure success and improve the system over time.

Define Your Use Case and Target User

Start by clearly identifying the exact problem your AI voice agent will solve and who will be using it. Is it meant for customer support, internal operations, or something else entirely? Knowing whether your users are customers, employees, or patients shapes how the agent should behave and respond.

In addition, think about where and how users will interact with the agent. Will it be through phone calls, web apps, or mobile devices? Consider language preferences, accents, and scenarios where the agent may fail. Planning these details early helps create a more realistic and effective solution.

Set Your Success Metrics Before You Start

Setting clear success metrics for your AI voice agent ensures you know whether your solution is actually working or not. Without defined benchmarks, it becomes difficult to measure performance or justify further investment. Metrics bring clarity to both technical and business outcomes.

Common metrics include first-call resolution rate, average handling time, customer satisfaction scores, and overall response latency. You can also track how many queries are handled without human intervention. These indicators give you a complete picture of how well your voice agent performs in real-world scenarios.
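As a rough illustration, the metrics above can be computed from simple call records. The `Call` fields below are assumptions about what your call logs contain, and "containment rate" is used here as a label for the share of calls handled without human intervention; the article itself does not name that metric.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Call:
    duration_s: float          # total handling time for the call, in seconds
    resolved_first_call: bool  # issue solved without a follow-up call
    escalated_to_human: bool   # a human agent had to take over

def summarize(calls: List[Call]) -> Dict[str, float]:
    """Return headline metrics for a batch of calls."""
    n = len(calls)
    if n == 0:
        raise ValueError("no calls to summarize")
    return {
        "first_call_resolution": sum(c.resolved_first_call for c in calls) / n,
        "avg_handling_time_s": sum(c.duration_s for c in calls) / n,
        "containment_rate": sum(not c.escalated_to_human for c in calls) / n,
    }
```

Running this on a weekly export gives you a baseline to compare against after each change to prompts, models, or conversation flow.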

Choose Your Build Path: No-Code, Low-Code, or Custom Development

Choosing the right AI voice agent build path is one of the most important decisions you'll make before starting development. Not every business needs a complex custom solution, and not every use case can be solved with no-code tools. The right choice depends on your team, timeline, budget, and the level of control you need.

In practice, AI voice agent development typically falls into three clear approaches: no-code, low-code, and fully custom development. Each path comes with its own trade-offs in terms of speed, flexibility, and scalability. Understanding these differences early helps you avoid overbuilding or limiting your solution.

Path 1 - No-Code: Build Without Programming

No-code platforms allow you to create an AI voice agent without writing a single line of code, making them ideal for non-technical users and fast experimentation. Tools like Voiceflow, Synthflow, and similar platforms offer visual builders where you can design conversations using drag-and-drop interfaces.

This approach works best for simple use cases such as appointment booking, basic customer support, or lead qualification. You can launch quickly, often within hours or days, but you may face limitations when it comes to deep integrations or advanced customisation as your needs grow.

Path 2 - Low-Code: The Middle Ground

Low-code development offers a balance between ease of use and flexibility, making it suitable for teams with some technical knowledge. Platforms like VAPI, Pipecat, and Botpress allow you to customise workflows while still providing pre-built components that speed up development.

With low-code, you can build more dynamic and scalable voice agents compared to no-code solutions. It gives you better control over integrations, logic, and performance without requiring a full engineering effort. This path is often chosen by startups and product teams looking to move fast while keeping room for growth.

Path 3 - Custom Build: Full Control from the Ground Up

Custom development is the most powerful approach to building an AI voice agent, offering complete control over every component of the system. This involves selecting and integrating technologies like STT engines, LLMs, TTS systems, and telephony infrastructure based on your exact requirements.

This path is ideal for businesses with complex workflows, high call volumes, or strict compliance needs. While it takes more time and investment, it allows you to build a highly tailored solution that fits perfectly with your systems and delivers a more refined user experience.

How to Choose the Right Path for Your Business

Choosing the right AI voice agent approach depends on aligning your business needs with the capabilities of each development path. If you need a quick solution with minimal effort, no-code is a strong starting point. If you want flexibility without full complexity, low-code offers a balanced option.

On the other hand, if your requirements involve deep integrations, scalability, or unique workflows, a custom build is often the better choice. Evaluating your team's technical skills, project timeline, and long-term goals will help you make a confident and practical decision.
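The guidance above can be written down as a simple rule of thumb. The sketch below is one possible encoding, not a definitive decision procedure: the `Requirements` fields and the order of the checks are assumptions, and a real decision would also weigh timeline and budget.

```python
from dataclasses import dataclass

@dataclass
class Requirements:
    has_developers: bool               # the team can write and maintain code
    needs_deep_integrations: bool = False
    high_call_volume: bool = False
    strict_compliance: bool = False

def recommend_path(req: Requirements) -> str:
    """Map requirements to a build path using the rules of thumb above."""
    if req.needs_deep_integrations or req.high_call_volume or req.strict_compliance:
        return "custom"
    if req.has_developers:
        return "low-code"
    return "no-code"
```

For example, `recommend_path(Requirements(has_developers=False))` returns `"no-code"`, while adding `strict_compliance=True` moves the recommendation to `"custom"`.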

How to Build an AI Voice Agent: Step-by-Step Process

The AI voice agent step-by-step process brings together planning, tools, and execution into a clear path from idea to deployment. Each step focuses on a specific layer of the system, helping you build a solution that is both functional and scalable.

Step 1: Choose Your Speech-to-Text (STT) Engine

The first step in building an AI voice agent is selecting a reliable Speech-to-Text engine that can accurately convert spoken input into text in real time. This choice directly impacts how well your system understands users, especially across different accents, languages, and speaking styles.

When evaluating STT options, focus on latency, accuracy, and language support. Some tools are better suited for real-time conversations, while others perform well in asynchronous environments. Choosing the right balance ensures your voice agent responds quickly without compromising understanding.

Step 2: Select Your LLM - The Brain of Your Agent

Once speech is converted into text, the next step is choosing a Large Language Model that can process the input and generate meaningful responses. The LLM acts as the brain of your AI voice agent, determining how intelligently it can understand context and respond.

Different models offer varying strengths in terms of speed, cost, and reasoning ability. Some are optimised for fast interactions, while others provide deeper contextual understanding. Your choice should align with the complexity of conversations your agent is expected to handle.

Step 3: Choose Your Text-to-Speech (TTS) Voice Engine

After generating a response, the system needs to convert text back into speech using a Text-to-Speech engine. This is where the voice quality of your AI agent is defined, making it a critical part of the user experience.

High-quality TTS engines produce natural-sounding voices with proper tone, pacing, and clarity. Factors like multilingual support, voice customisation, and streaming capabilities play an important role in creating a more human-like interaction.
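Streaming matters because the TTS engine can start speaking before the LLM has finished writing. One common way to do this is to cut the LLM's output into sentences as it arrives and send each one to TTS straight away. The sketch below shows only the chunking step; the token stream is a plain list standing in for a real streaming response, and the sentence rule is deliberately simple.

```python
import re
from typing import Iterable, Iterator

# A sentence ends at ., !, or ? followed by whitespace (a simplifying assumption).
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")

def sentence_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a token stream."""
    buffer = ""
    for token in tokens:
        buffer += token
        parts = _SENTENCE_END.split(buffer)
        # Everything except the last part is a finished sentence.
        for sentence in parts[:-1]:
            if sentence.strip():
                yield sentence.strip()
        buffer = parts[-1]
    # Flush whatever is left when the stream ends.
    if buffer.strip():
        yield buffer.strip()
```

Each yielded sentence can be handed to the TTS engine immediately, so the caller hears the first sentence while the rest is still being generated. A production version would need to handle abbreviations such as "Dr." that this simple rule splits incorrectly.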

Step 4: Design Your Conversation Flow

Designing the conversation flow is where your AI voice agent starts to feel truly intelligent and user-friendly. This means mapping out user intents, possible responses, and how the system should behave in different scenarios. Many teams sketch this out in a mind-mapping tool before building the logic.

It's important to plan for edge cases, interruptions, and fallback responses when the agent cannot understand the user. A well-designed flow ensures smooth interactions and avoids frustrating or repetitive experiences for users.
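Here is a minimal sketch of what a flow with reprompts and a fallback can look like in code. The intents, keywords, and the `MAX_REPROMPTS` limit are all hypothetical, and keyword matching stands in for the LLM or NLU model a real agent would use.

```python
from typing import Dict, Optional, Tuple

# Hypothetical intents and trigger words, for illustration only.
INTENT_KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "book_appointment": ("book", "appointment", "schedule"),
    "order_status": ("order", "tracking", "delivery"),
}
MAX_REPROMPTS = 2  # assumed limit before switching to a fallback response

def detect_intent(utterance: str) -> Optional[str]:
    """Return the first intent whose keyword appears in the utterance."""
    words = utterance.lower().split()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(k in words for k in keywords):
            return intent
    return None

class Conversation:
    def __init__(self) -> None:
        self.misses = 0  # consecutive turns the agent failed to understand

    def respond(self, utterance: str) -> str:
        intent = detect_intent(utterance)
        if intent is not None:
            self.misses = 0
            return f"intent:{intent}"
        self.misses += 1
        # Ask again a limited number of times, then stop repeating ourselves.
        return "reprompt" if self.misses <= MAX_REPROMPTS else "fallback"
```

The counter is what prevents the repetitive loop users find frustrating: after two failed reprompts the agent switches to a different response, such as offering a menu of options.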

Step 5: Integrate with Your Business Systems

To make your AI voice agent useful in real-world scenarios, it needs to connect with your existing business systems. This includes CRM platforms, customer support tools, databases, and communication channels.

These integrations allow the agent to access real-time information, perform actions like booking appointments, and provide personalised responses. Without proper integration, even a well-built voice agent remains limited in its capabilities.
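One common pattern for integrations is a small registry that maps action names to functions, so the agent can request "book_appointment" without knowing how the booking system works. The sketch below uses an in-memory dictionary in place of a real CRM or calendar; every name in it is hypothetical.

```python
from typing import Any, Callable, Dict

# In-memory stand-in for a booking system or CRM (hypothetical).
_BOOKINGS: Dict[str, str] = {}

def book_appointment(customer_id: str, slot: str) -> dict:
    if slot in _BOOKINGS.values():
        return {"ok": False, "reason": "slot_taken"}
    _BOOKINGS[customer_id] = slot
    return {"ok": True, "slot": slot}

def get_booking(customer_id: str) -> dict:
    slot = _BOOKINGS.get(customer_id)
    return {"ok": slot is not None, "slot": slot}

# Registry of actions the agent is allowed to trigger.
ACTIONS: Dict[str, Callable[..., dict]] = {
    "book_appointment": book_appointment,
    "get_booking": get_booking,
}

def run_action(name: str, **params: Any) -> dict:
    """Look up an action by name and call it, returning a structured result."""
    handler = ACTIONS.get(name)
    if handler is None:
        return {"ok": False, "reason": "unknown_action"}
    try:
        return handler(**params)
    except TypeError:
        # Raised when the supplied parameters do not match the handler.
        return {"ok": False, "reason": "bad_parameters"}
```

Returning a structured result instead of raising lets the agent explain the problem to the caller, for example by offering another slot when the reason is `slot_taken`.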

Step 6: Test, Optimise, and Handle Edge Cases

Testing is a crucial phase where you evaluate how your AI voice agent performs under real-world conditions. This includes checking response accuracy, latency, and how well the system handles unexpected inputs.

You should test across different accents, speech speeds, and environments to ensure reliability. Continuous optimisation based on real interactions helps improve performance and refine the overall experience over time.
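A practical way to compare transcription accuracy across accents is word error rate (WER), a standard STT metric that this guide does not otherwise name. The sketch below computes WER with a word-level edit distance and averages it per group. The group labels and transcripts you feed in are your own test data; nothing here is tied to a particular STT vendor.

```python
from typing import Dict, List, Tuple

def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] = edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)

def wer_by_group(samples: List[Tuple[str, str, str]]) -> Dict[str, float]:
    """samples holds (group label, reference, hypothesis); returns mean WER per group."""
    scores: Dict[str, List[float]] = {}
    for group, ref, hyp in samples:
        scores.setdefault(group, []).append(word_error_rate(ref, hyp))
    return {g: sum(v) / len(v) for g, v in scores.items()}
```

If one group's average WER is clearly higher than the others, that is a signal to collect more test audio for that group or to try a different STT engine before launch.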

Step 7: Deploy and Monitor Performance

Once everything is tested, the final step is deploying your AI voice agent into a live environment where users can interact with it. This typically involves cloud deployment and setting up monitoring systems to track performance.

After deployment, ongoing monitoring becomes essential to identify issues, measure success metrics, and continuously improve the system. A well-maintained voice agent evolves over time, adapting to user needs and delivering better results with each update.

AI Voice Agent Tech Stack: Complete Tools & Platforms Compared

The AI voice agent tech stack includes all the tools and platforms required to process speech, generate responses, and deliver natural voice output in real time. Choosing the right combination of technologies is essential because each layer directly impacts performance, cost, and user experience.

In most cases, this stack is built using three core components: Speech-to-Text for understanding input, a Language Model for processing logic, and Text-to-Speech for delivering responses. Alongside these, orchestration platforms help connect everything smoothly, making the system easier to manage and scale as your needs grow.

STT Tools Compared: Accuracy, Latency & Cost

Speech-to-Text tools form the foundation of any AI voice agent by converting spoken language into text quickly and accurately. The right STT engine ensures your system understands users clearly, even in real-time conversations with varying accents and speech patterns.

Common options include Deepgram for low latency performance, OpenAI Whisper for high accuracy, AssemblyAI for customisable processing, and Google STT for broad language support. Each tool varies in cost, speed, and language capabilities, so the choice depends on your specific use case and scale.

LLM Options: Which AI Model to Use for Voice?

Large Language Models power the intelligence of your AI voice agent, enabling it to understand context, generate responses, and manage conversations effectively. Selecting the right model impacts how natural and helpful your agent feels during interactions.

Popular choices include GPT-4o for balanced performance, Claude for strong reasoning, Gemini for speed and integration, and Llama models for open-source flexibility. The decision often depends on factors like response time, cost efficiency, and the complexity of conversations your agent needs to handle.

TTS Engines Ranked: Which Voice Sounds Most Natural?

Text-to-Speech engines are responsible for turning generated text into human-like speech, shaping how users perceive your AI voice agent. A natural and clear voice can significantly improve user engagement and trust.

Leading options include ElevenLabs for highly realistic voices, Cartesia for fast streaming output, OpenAI TTS for tight integration, and Google WaveNet for reliable multilingual support. The best choice depends on voice quality, latency, and the level of customisation you need.

Orchestration Platforms: Where It All Comes Together

Orchestration platforms act as the glue that connects all components of your AI voice agent into a seamless workflow. They manage real-time communication between STT, LLM, and TTS systems while handling integrations and logic.

Tools like VAPI, Pipecat, Voiceflow, Retell AI, and telephony providers such as Twilio or Telnyx help streamline development and deployment. Choosing the right orchestration layer simplifies complexity and allows you to focus more on building meaningful user experiences.

How Long Does It Take? Timeline and Cost to Build an AI Voice Agent

AI voice agent cost and timeline are often the first questions businesses ask before starting development. While the technology may seem straightforward at a glance, the actual effort depends on your chosen approach, level of complexity, and integration requirements.

Understanding both time and cost upfront helps you plan better and avoid unrealistic expectations. Whether you're building a simple prototype or a fully integrated enterprise solution, having a clear estimate allows you to allocate resources effectively and move forward with confidence.

Build Timeline by Approach

The timeline for building an AI voice agent varies significantly based on the development path you choose. No-code solutions are the fastest, allowing you to launch a basic agent within a few hours to a couple of days. These are ideal for quick testing or simple use cases with minimal complexity.

Low-code development typically takes one to four weeks, depending on the level of customisation and integrations required. On the other hand, fully custom-built AI voice agents can take anywhere from 8 to 24 weeks, especially when dealing with complex workflows, multiple systems, and compliance requirements.

How Much Does It Cost to Build an AI Voice Agent?

The cost of building an AI voice agent depends on factors like tools, infrastructure, and the scale of deployment. No-code platforms are the most affordable, usually ranging from $0 to $500 per month, including subscriptions and API usage costs.

Low-code solutions often require an initial setup investment between $2,000 and $15,000, along with ongoing monthly expenses. For custom-built solutions, costs can range from $15,000 to $150,000 or more, depending on integrations, performance needs, and compliance standards. Every project is different, so getting a tailored estimate based on your specific requirements is always the smarter next step.
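For quick planning, the ranges quoted in this section can be kept in a small lookup table. The sketch below copies the figures from this article and adds one hypothetical helper that filters paths by deadline. Converting "a couple of days" to 2 days and using the upper end of each timeline are assumptions made to keep the check conservative.

```python
from typing import Dict, List

# Figures copied from this article; the custom upper bound is "or more" in practice.
BUILD_PATHS: Dict[str, dict] = {
    "no-code": {"max_days": 2, "cost_usd": (0, 500), "cost_basis": "per month"},
    "low-code": {"max_days": 28, "cost_usd": (2_000, 15_000), "cost_basis": "initial setup"},
    "custom": {"max_days": 168, "cost_usd": (15_000, 150_000), "cost_basis": "total build"},
}

def paths_fitting_deadline(days_available: int) -> List[str]:
    """Return paths whose longest quoted timeline still fits the deadline."""
    return [name for name, info in BUILD_PATHS.items()
            if info["max_days"] <= days_available]
```

With a 30-day deadline, `paths_fitting_deadline(30)` returns `["no-code", "low-code"]`, which matches the one to four week range quoted for low-code builds.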

Common Mistakes When Building an AI Voice Agent (And How to Avoid Them)

AI voice agent mistakes are often the hidden reason why many projects fail even after using the right tools and technologies. While building the system may seem straightforward, small oversights in design, planning, or testing can lead to poor user experience and low adoption.

Looking at common pitfalls early helps you avoid costly rework and ensures your voice agent performs reliably in real-world conditions. These mistakes are not technical limitations but strategic gaps that can be fixed with the right approach from the start.

Mistake 1: Ignoring Latency Until It's Too Late

One of the biggest issues in AI voice agents is latency, which directly affects how natural a conversation feels. Even if each component works well individually, delays add up across STT, LLM, and TTS, making responses feel slow and unnatural. To avoid this, measure end-to-end latency from the beginning rather than focusing on individual components. Optimising the entire pipeline ensures faster responses and smoother interactions, which are critical for maintaining user engagement.
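Measuring end-to-end latency can be as simple as adding up the per-stage timings for each turn and checking a high percentile against a budget. In the sketch below, the stage names and the budget you pass in are assumptions; pick a target that suits your own use case rather than treating any number here as a standard.

```python
import math
from typing import Dict, List

def end_to_end_ms(stage_ms: Dict[str, float]) -> float:
    """Total delay for one turn: the sum of all stage latencies."""
    return sum(stage_ms.values())

def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def over_budget(turns: List[Dict[str, float]], budget_ms: float, pct: float = 95) -> bool:
    """True when the chosen percentile of end-to-end latency exceeds the budget."""
    totals = [end_to_end_ms(t) for t in turns]
    return percentile(totals, pct) > budget_ms
```

Checking a high percentile rather than the average matters because a handful of slow turns is enough to make a conversation feel broken, even when the typical turn is fast.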

Mistake 2: No Clear Human Escalation Path

AI voice agents cannot handle every scenario perfectly, and there will always be situations where human intervention is required. Without a proper escalation path, users can feel stuck or frustrated when the system fails to understand them. Planning a seamless handoff to human agents ensures continuity and builds trust. This includes defining when escalation should happen, what information is passed along, and how the transition is communicated to the user.
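The three parts of an escalation plan (when to hand off, what to pass along, and what to tell the user) can be sketched in a few lines. The trigger words, the failure threshold, and the fields in the handoff payload below are all assumptions; adapt them to what your support team actually needs to see.

```python
from dataclasses import dataclass, field
from typing import List, Optional

ESCALATION_WORDS = ("human", "agent", "representative")  # assumed trigger words
MAX_FAILED_TURNS = 2                                      # assumed threshold

@dataclass
class CallState:
    caller_id: str
    transcript: List[str] = field(default_factory=list)
    failed_turns: int = 0
    intent: Optional[str] = None

def should_escalate(state: CallState, utterance: str) -> bool:
    """Escalate when the caller asks for a person or the agent keeps failing."""
    asked = any(w in utterance.lower().split() for w in ESCALATION_WORDS)
    return asked or state.failed_turns >= MAX_FAILED_TURNS

def build_handoff(state: CallState) -> dict:
    """Bundle the context a human agent needs so the caller need not repeat it."""
    return {
        "caller_id": state.caller_id,
        "last_intent": state.intent,
        "recent_transcript": state.transcript[-5:],
        "announcement": "I'm connecting you with a team member who can help.",
    }
```

Passing the recent transcript along with the handoff is what keeps the transition smooth: the human agent can pick up the conversation where the voice agent left off.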

Mistake 3: Prioritising Tech Over Conversation Design

Focusing only on technology while ignoring conversation design is a common mistake that leads to poor user experience. Even with the best tools, a poorly structured conversation can feel robotic and confusing. A well-designed interaction considers tone, pacing, interruptions, and context. Investing time in conversation design ensures the agent feels natural and intuitive, rather than just technically functional.

Mistake 4: Skipping Accent and Language Testing

Many AI voice agents perform well in controlled environments but struggle with real-world variations in speech. Differences in accents, dialects, and language usage can significantly impact accuracy and user satisfaction. Testing with diverse user groups helps identify these gaps early. Incorporating multilingual support and domain-specific vocabulary improves understanding and makes the system more inclusive and reliable.

Mistake 5: No Plan for Ongoing Optimisation

Launching an AI voice agent is not the end of the journey; it's just the beginning. Without continuous monitoring and improvement, performance can decline over time as user behaviour and expectations evolve. Setting up regular review cycles, tracking performance metrics, and updating prompts or models ensures your system stays effective. Ongoing optimisation keeps the experience fresh and aligned with user needs.

Build Your AI Voice Agent with Alpharive

AI voice agent development is not just about connecting tools; it's about making the right decisions at every stage, from architecture to conversation design. The difference between an agent that users trust and one they abandon often comes down to how well these details are handled. That's where experience plays a major role.

At Alpharive, an AI agent development company, the focus is on delivering AI voice agent solutions that are not only functional but also reliable and scalable. From helping businesses choose the right build path to handling full-scale custom development, each project is approached with clarity and precision. The process covers everything from STT, LLM, and TTS selection to integrations, testing, and deployment, ensuring a smooth journey from idea to execution.

Beyond the initial build, continuous optimisation is treated as an essential part of the solution. Voice agents evolve with usage, and improving performance over time is key to long-term success. Whether it's refining conversation flows, improving response accuracy, or scaling the system, ongoing support ensures consistent results.

For businesses looking to build or scale their AI voice agent, working with a team that understands both technology and real-world use cases makes a clear difference. Instead of navigating complexity alone, having the right partner can simplify the process and accelerate outcomes. Talk to our experts and automate your business.